AI hallucinations: How to avoid the trap

- Rock Consultancy

- Apr 14

April 2026

Intro:

AI hallucinations continue to make headlines. At best, they may cause minor embarrassment in the workplace. At worst, they can get you into trouble in court or, as the case of senior journalist Peter Vandermeersch shows, lead to suspension from a high-profile role. GenAI output can be so convincing that it leads to unfortunate outcomes.

As we all learn to navigate AI, this is a cautionary tale against complacency. Many may be forgiven today for lacking the necessary AI literacy when using GenAI tools, but that leeway will fade. It is important for every organisation to have an AI policy (covering GenAI), for personnel to be familiar with it, and for everyone to understand the pitfalls of AI.

Details:

In early March 2026, Mediahuis suspended senior journalist Peter Vandermeersch over his use of GenAI in writing articles. He admitted to putting “words in people’s mouths”.

According to media reports, Vandermeersch's suspension came after multiple quotes in his articles could not be verified. The quotes in question turned out to be fictitious and had been generated using AI tools. He responded that he “fell into the hallucination trap”. Hallucinations can be difficult to spot because the inaccurate or false information that AI tools generate is presented as fact, and very convincingly.

Mediahuis has maintained that it has a strict policy on the use of AI, stressing the importance of diligence, human oversight and transparency. In this particular case, however, those rules were not followed.

In December 2025, a US court imposed $96,000 in sanctions for unprofessional use of AI, one of the highest sanctions of its kind. Three filings contained 15 non-existent cases and 8 fabricated quotes that falsely represented real legal authorities. The local counsel was sanctioned $14,000 for failing to review the documents, and the client's case was dismissed. The court described the improper behaviour as “a notorious outlier in both degree and volume”, underlining the level of damage AI hallucinations can cause.

The message is abundantly clear: AI may generate content, but AI bears no professional or legal obligations or responsibility. People do. Verification is mandatory.

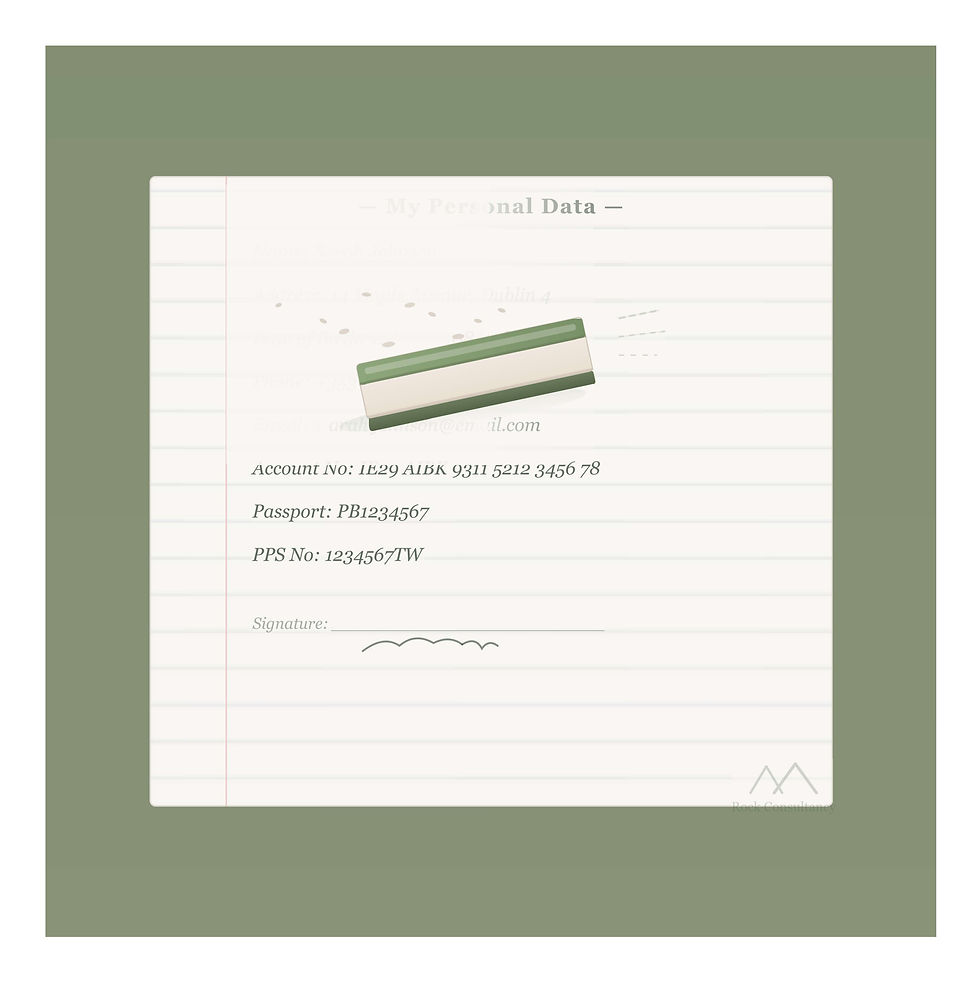

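AI tools do not always provide accurate information and may produce fabricated output, so personnel need to be AI literate. AI literacy is not only a requirement under the EU AI Act (Article 4 requires providers and deployers of AI systems to take measures to ensure a sufficient level of AI literacy among their staff); it is also imperative for the successful adoption of AI in any organisation.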

How can organisations manage challenges around AI in the workplace?

An AI Policy needs to be in place and be subject to regular review.

All personnel must be familiar with the organisation's AI Policy and understand the potential ramifications of not following it.

AI literacy is integral for successful AI adoption.

Human oversight requirements need to be documented and made clear for each AI tool.

Key Takeaways:

Policies: Existing data, security and acceptable-use policies and procedures need to be reviewed and updated where necessary. An AI policy should be introduced that sets out what is permitted and what is not, and that includes ‘human in the loop’ provisions.

Policy Implementation: AI policies need to be operationalised so that they are effective in practice, not just on paper.

Adequate Training: AI literacy is not optional. It is a MUST. Employers must ensure that their personnel receive adequate training in the appropriate use of AI.

Further Reading:

EU AI Act - Regulation (EU) 2024/1689 of the European Parliament and of the Council (Artificial Intelligence Act)

Irish Times: Mediahuis suspends senior journalist after admission of using AI material in his work

Couvrette v. Wisnovsky

At Rock Consultancy, we can support your organisation by creating AI policies and AI governance structures, and by training your personnel.

For any queries on this article or how Rock Consultancy can support your organisation, please contact us at info@rockconsultancy.ie